Integrating MLOps With IoT

- By Shikhar Kwatra, Utpal Mangla, Vinod Bijlani, and Mathews Thomas

- February 28, 2022

With advances in AI, operationalizing machine learning and deep learning models has become a key focus area in the field.

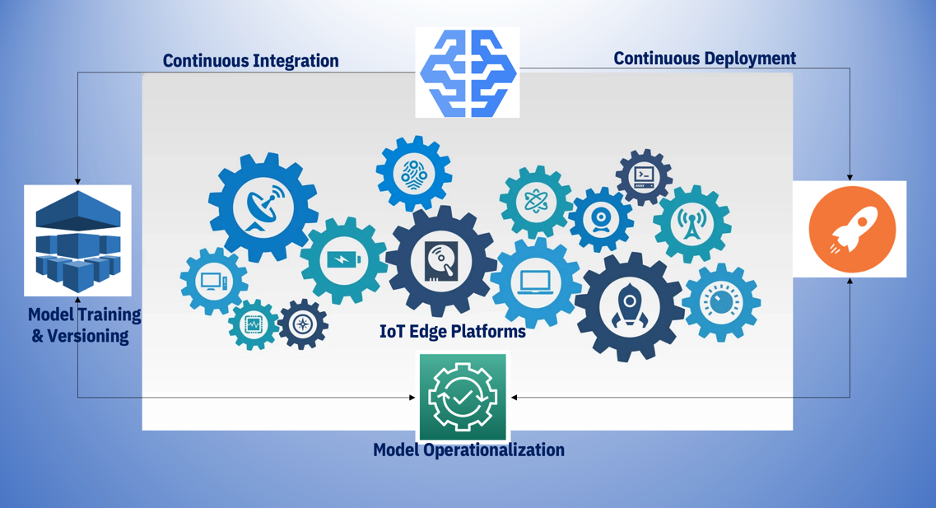

In a typical organization working on machine learning or deep learning business cases, the data science and IT teams need to collaborate extensively to scale multiple machine learning models and push them to production faster. They do so through continuous training, continuous validation, continuous deployment, and continuous integration, all under governance.

MLOps has opened a new era of the DevOps paradigm in the machine learning and artificial intelligence realm by automating end-to-end workflows.

As systems optimize models and bring data processing and analysis closer to the edge, data scientists and ML engineers keep finding new ways to handle the complexities of operationalizing models on IoT edge devices.

According to a LinkedIn publication, global AI spending will reach USD232 billion by 2025 and USD5 trillion by 2050. According to Cognilytica, the global MLOps market, which was worth USD350 million in 2019, will be worth USD4 billion by 2025.

Models running on IoT edge devices need to be retrained frequently because environmental parameters vary: continuous data drift, combined with limited access to such IoT edge solutions, may degrade model performance over time. The target platforms on which ML models are deployed can also vary, from IoT Edge to specialized hardware such as FPGAs, which adds a high level of complexity and customization to MLOps on such platforms.
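One common way to quantify the data drift described above is the Population Stability Index (PSI), which compares how a feature is distributed at training time with how it is distributed on the device. The sketch below is a minimal, standard-library illustration, not a method taken from this article: the function name, the bin count, and the 0.2 alert threshold (a widely quoted rule of thumb) are all assumptions.

```python
import math
from typing import List, Sequence


def population_stability_index(
    reference: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare two samples of one feature; larger values indicate more drift."""
    lo, hi = min(reference), max(reference)
    # Equal-width bins over the reference range; fall back to 1.0 for constant data.
    width = (hi - lo) / bins or 1.0

    def bin_fractions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the reference range land in the first or last bin.
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    ref_frac = bin_fractions(reference)
    cur_frac = bin_fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_frac, cur_frac))


# Assumed rule-of-thumb threshold; tune it per feature and per device.
DRIFT_THRESHOLD = 0.2

training_sample = [i / 100 for i in range(100)]
device_sample = [v + 0.5 for v in training_sample]  # simulated sensor shift
drift_detected = population_stability_index(training_sample, device_sample) > DRIFT_THRESHOLD
```

A check like this is cheap enough to run on the device itself, so only the drift score, rather than the raw sensor data, needs to travel back over a constrained link.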

After a model has been profiled to determine the cores, CPU, and memory settings it needs on the target IoT platform, it can be packaged into a Docker image for deployment. Such IoT devices also have multiple dependencies that must be packaged and deployed with the model so that it executes seamlessly on the platform. Containers make this packaging straightforward because they can span both cloud and IoT edge platforms.
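As a rough illustration of the profiling step, the sketch below measures the mean latency and peak Python-level memory of a prediction function and compares them with a device budget. The names `profile_model` and `fits_device`, and the budget values, are hypothetical. Note that `tracemalloc` only sees allocations made through Python's allocator, so memory used by native inference runtimes would need a different tool.

```python
import os
import time
import tracemalloc
from typing import Any, Callable, Dict


def profile_model(predict: Callable[[Any], Any], sample: Any, runs: int = 20) -> Dict[str, float]:
    """Measure mean latency and peak Python memory for one prediction function."""
    # Latency is timed without tracemalloc, which would slow the calls down.
    start = time.perf_counter()
    for _ in range(runs):
        predict(sample)
    mean_latency_ms = 1000.0 * (time.perf_counter() - start) / runs

    # Memory: peak Python-level allocation observed during a single call.
    tracemalloc.start()
    predict(sample)
    _, peak_bytes = tracemalloc.get_traced_memory()
    tracemalloc.stop()

    return {
        "cpu_cores": float(os.cpu_count() or 1),  # cores visible on the profiling host
        "mean_latency_ms": mean_latency_ms,
        "peak_python_memory_kib": peak_bytes / 1024.0,
    }


def fits_device(profile: Dict[str, float], max_latency_ms: float, max_memory_kib: float) -> bool:
    """Check a profile against a (hypothetical) device budget."""
    return (
        profile["mean_latency_ms"] <= max_latency_ms
        and profile["peak_python_memory_kib"] <= max_memory_kib
    )


# Stand-in for a real model: a sum of squares over the input features.
profile = profile_model(lambda xs: sum(x * x for x in xs), [1.0] * 1000, runs=5)
```

The resulting numbers can then inform the CPU and memory limits set on the container when it is deployed to the device.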

When running on IoT edge platforms with specific device dependencies, teams must decide which containerized machine learning models need to be available offline because of limited connectivity. An access script that loads the model, invokes the endpoint, and scores each request arriving at the edge device must be operational to return the corresponding probabilistic output.
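A minimal sketch of such an access script follows. It assumes, purely for illustration, that the model is a logistic regression stored as a local JSON file with `weights` and `bias` fields, so that it can be loaded and scored without connectivity. The file layout and the function names are hypothetical, and a real deployment would load whatever model format its runtime expects.

```python
import json
import math
from pathlib import Path
from typing import Dict, List


def load_model(path: Path) -> Dict:
    """Load model parameters from local storage so scoring works offline."""
    return json.loads(path.read_text())


def score(model: Dict, features: List[float]) -> float:
    """Return the predicted probability for one feature vector."""
    z = model["bias"] + sum(w * x for w, x in zip(model["weights"], features))
    # Numerically stable sigmoid: never exponentiates a large positive number.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def handle_request(model: Dict, request_body: str) -> str:
    """Score one JSON request of the assumed form {"features": [...]}."""
    payload = json.loads(request_body)
    return json.dumps({"probability": score(model, payload["features"])})


# Example with an in-memory model; on a device, load_model(Path(...)) would supply it.
example_model = {"weights": [0.8, -0.4], "bias": 0.1}
response = handle_request(example_model, '{"features": [1.0, 2.0]}')
```

In practice `handle_request` would sit behind whatever endpoint the container exposes; it is kept as a plain function here so the scoring logic can be tested without a server.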

Continuous monitoring and retraining of models deployed on IoT devices need to be handled through the model artifact repository and model versioning features of the MLOps framework. The different images of the deployed models are stored in a shared device repository so that the right image can be fetched quickly and deployed to the IoT device at the right time.
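The idea of a versioned model repository can be sketched with a small in-memory registry. This is an illustration under assumptions rather than the API of any particular MLOps product: the class names, the `metric` field, and the image references are hypothetical, and a real registry would persist its entries and store the images in a container registry.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ModelVersion:
    name: str
    version: int
    image: str     # container image reference for this version
    metric: float  # validation metric recorded at registration time


class ModelRegistry:
    """Keeps an ordered version history per model name."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[ModelVersion]] = {}

    def register(self, name: str, image: str, metric: float) -> ModelVersion:
        history = self._versions.setdefault(name, [])
        entry = ModelVersion(name, len(history) + 1, image, metric)
        history.append(entry)
        return entry

    def latest(self, name: str) -> ModelVersion:
        return self._versions[name][-1]

    def best(self, name: str) -> ModelVersion:
        """Version with the highest recorded metric, e.g. as a rollback target."""
        return max(self._versions[name], key=lambda m: m.metric)

    def get(self, name: str, version: int) -> ModelVersion:
        return self._versions[name][version - 1]


registry = ModelRegistry()
registry.register("anomaly-detector", "registry.example/anomaly:1", metric=0.91)
registry.register("anomaly-detector", "registry.example/anomaly:2", metric=0.88)
```

Keeping the metric next to each version makes it possible to fall back to `best()` when the newest retrained model turns out to perform worse than an earlier one.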

Model retraining can be triggered by a job scheduler running on the edge device or when new data arrives and invokes the REST endpoint of the machine learning model. Continuous model retraining, versioning, and model evaluation thus become an integral part of the pipeline.
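The two triggers described above, a schedule and the arrival of new data, can be combined into one small policy check. The sketch below is one possible shape for it. The class name and the default thresholds (seven days, 1,000 new samples) are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class RetrainPolicy:
    max_age: timedelta = timedelta(days=7)  # schedule-based trigger
    min_new_samples: int = 1000             # data-based trigger

    def should_retrain(self, last_trained: datetime, now: datetime, new_samples: int) -> bool:
        """Retrain when the model is too old or enough new data has arrived."""
        too_old = (now - last_trained) >= self.max_age
        enough_data = new_samples >= self.min_new_samples
        return too_old or enough_data


policy = RetrainPolicy()
last_trained = datetime(2022, 1, 1)

# One day later with only a handful of new samples: no retraining yet.
retrain_now = policy.should_retrain(last_trained, last_trained + timedelta(days=1), new_samples=10)
```

A scheduler on the device, or the handler behind the model's endpoint, could call `should_retrain` and start the retraining pipeline whenever it returns `True`.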

When the data changes frequently, as it often does with IoT edge devices and platforms, models must be versioned and refreshed more often to keep up with data drift. Automating the retraining process lets the MLOps engineer handle this, which frees data scientists to spend their time on feature engineering and model development.

The number of such devices collaborating continuously over the internet and integrating with MLOps capabilities is poised to grow over the coming years. Multi-factored optimizations will continue to emerge, making the work of data scientists and ML engineers easier and more focused through an end-to-end automated approach to model operationalization.

This article was jointly written by Shikhar Kwatra, IBM’s data and AI architect; Utpal Mangla, IBM’s general manager for industry EDGE Cloud on IBM Cloud Platform; Mathews Thomas, IBM’s distinguished engineer; and Vinod Bijlani, an AI and IoT expert who contributed to the design and implementation of various smart city projects.

The views and opinions expressed in this article are those of the authors and do not necessarily reflect those of CDOTrends. Image credits: iStockphoto/metamorworks (front image); IBM (diagram)