Improved, New AI Models Are Pushing the Boundaries of Possibility

- By Paul Mah

- April 06, 2022

Despite recent arguments about how deep learning is fundamentally a technique for recognizing patterns and only capable of generating “rough-and-ready” results, it appears that the first few months of 2022 have brought us some pretty jaw-dropping progress in the field of AI.

Filling in the blanks

For instance, OpenAI last month released new versions of GPT-3 and Codex that adds the ability to insert content into existing text (for GPT-3) and edit code (for Codex).

For the uninitiated, GPT-3 is an autoregressive language model that uses deep learning to produce rather impressive human-like text or conversations. GPT-3 traditionally adds text to the end of existing content, generating new copy or code based on what came before.

The new “insert” capability allows the models to add text anywhere, changing what’s currently there or adding text to the middle of content. Crucially, it adds the contextually relevant text in the middle of existing content, effectively elevating GPT-3’s functionality to an entirely new level.

In a nutshell, GPT-3 can fill in the blank around an outline with two disparate sentences, parsing them for the context required to create new copy while also tying the two sections together.

Or as explained on the OpenAI blog: “[This] can improve the quality of completions for applications such as writing long-form text, transitioning between paragraphs, following an outline, or guiding the model toward an ending.

The “edit” capability is also proving useful for writing code, allowing for the automation of tasks such as refactoring, adding documentation, or even translating between programming languages.

In an example shown, Codex reacted to instructions to convert a static text into a list, then into YAML format, and finally to JSON ordered by defined fields. While mundane, the ability to do these automatically can save programmers precious time and reduce the likelihood of human errors that end up as software bugs.

More efficient than GPT-3

Speaking of GPT-3, the Allen Institute for AI (AI2) earlier this year demonstrated a model that it claims performs on par or better than GPT-3 when it comes to answering questions, and at a tenth of GPT-3’s massive footprint of 175 billion parameters.

Called Macaw, it emerged from research into creating an AI that performs at human levels on standardized tests, reports TechCrunch.

And based on exchange samples furnished by the team, it appears to provide better answers to a variety of questions, including tricky ones that GPT-3 stumbles over. Some examples:

Question: How would you make a marriage stronger?

Macaw: Communicate

GPT-3: I would make sure that I was the one who was always right.

Question: If a bird didn’t have wings, how would it be affected?

Macaw: It would be unable to fly

GPT-3: It would be a bad bird.

Question: How does a snake move around?

Macaw: By slithering on all fours

GPT-3: Snakes move by using their muscles to push against the ground. They can move in any direction, but they cannot move backwards.

Pro tip from the editor here, I’ll probably ignore GPT-3’s suggestion for a stronger marriage.

To be clear, AI2 head Oren Etzioni told TechCrunch that Macaw was not meant to be a GPT-3 replacement, but is an ongoing research project meant to advance language generation and understanding around the world.

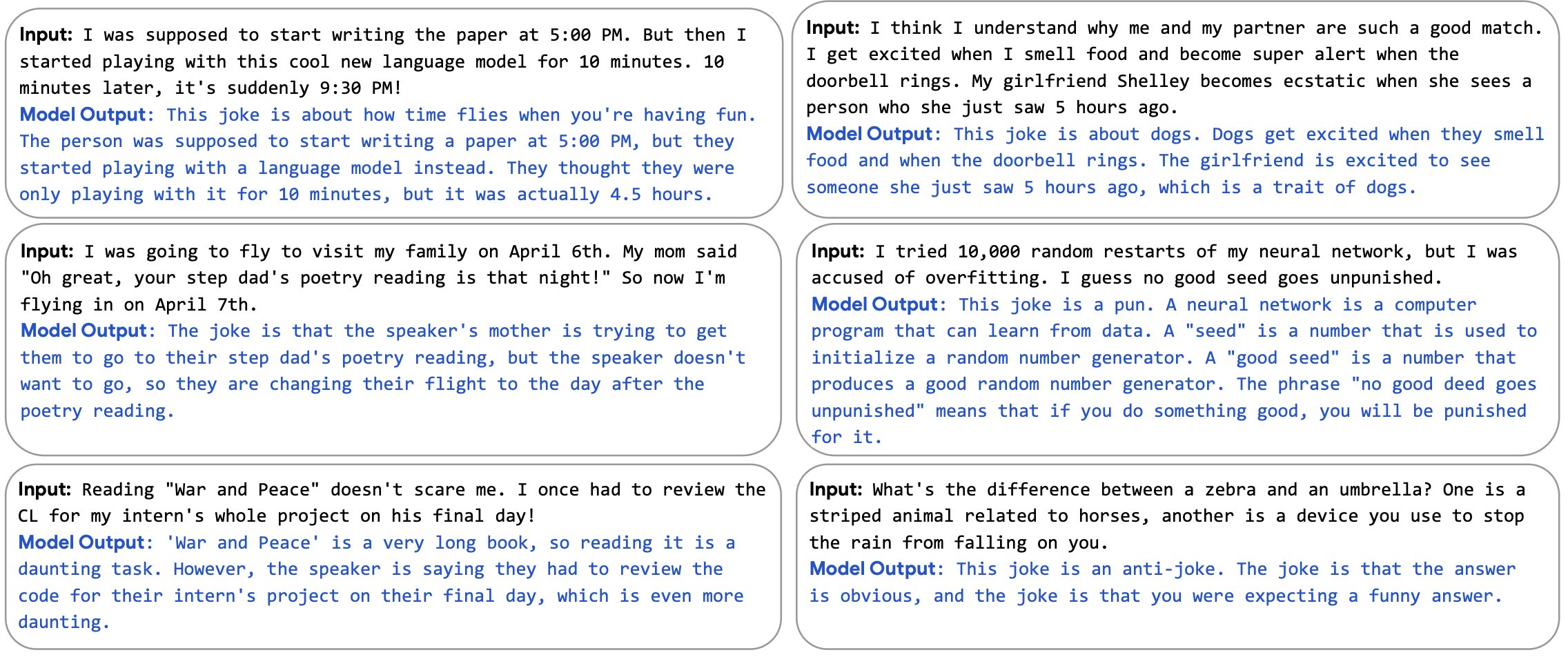

AI explaining jokes

Not to be outdone, Google this week unveiled its Pathways Language Model (PaLM), a massive 540-billion parameter, dense decoder-only Transformer model trained with a new “Pathways system” which allowed Google to efficiently train a single model across multiple clusters of AI accelerators.

Apart from the fact that PaLM puts us over the halfway mark to Nvidia’s trillion-parameter computer intelligence aspirations, Google says PaLM achieves “state-of-the-art” performance across most tasks, and by significant margins in many cases.

“PaLM demonstrates impressive natural language understanding and generation capabilities on several BIG-bench tasks. For example, the model can distinguish cause and effect, understand conceptual combinations in appropriate contexts, and even guess the movie from an emoji,” explained software engineers Sharan Narang and Aakanksha Chowdhery.

Remarkably, PaLM can generate explicit explanations for scenarios that require multi-step logical inference, world knowledge, and deep language understanding. And yes, it turns out that it can provide high-quality explanations for unique jokes not found on the web.

Indeed, a fine-tuned version of PaLM was also able to fix broken C programs until they compile successfully, achieving a compile rate of 82.1% to outperform the prior record of 71.7%. It was also able to solve some grade-school math problems by correctly decomposing multi-step problems into multiple parts.

The incredible achievement aside, it is worth noting that PaLM was partly created to demonstrate Google’s ability to harness thousands of AI processors for a single model.

“PaLM demonstrates the scaling capability of the Pathways system to thousands of accelerators .... Pushing the limits of model scale enables [breakthrough] performance of PaLM across a variety of natural language processing, reasoning, and code tasks,” concluded Narang and Chowdhery.

Clearly, the day is still young for advancements in AI.

Paul Mah is the editor of DSAITrends. A former system administrator, programmer, and IT lecturer, he enjoys writing both code and prose. You can reach him at [email protected].

Image credit: iStockphoto/Ryzhi

Paul Mah

Paul Mah is the editor of DSAITrends, where he report on the latest developments in data science and AI. A former system administrator, programmer, and IT lecturer, he enjoys writing both code and prose.