Robot ‘Dog’ Learns To Walk in an Hour

- By DSAITrends editors

- July 20, 2022

Newborn animals in the wild must learn to walk quickly to avoid predators. Innate spinal cord reflexes help them avoid falling during their first attempts, and they master the rest of the skill within a short time. Exactly how that learning happens, however, is not well understood.

To explore how animals learn to walk, researchers at the International Max Planck Research School for Intelligent Systems (IMPRS-IS) built a dog-sized, four-legged robot.

Morti learns to walk

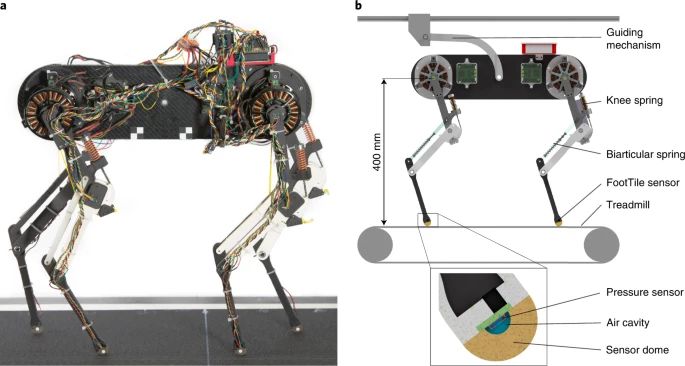

Named Morti, the robot has four “biarticular legs” mounted on a carbon fiber body. Each leg has three segments: femur, shank, and foot. The femur and foot segments are coupled by a spring-loaded mechanism that spans both the knee and the ankle, mimicking the biarticular muscle-tendon structure of animal legs.

Morti was placed on a treadmill fitted with a linear rail that allowed the robot's body to pitch. Sensors on Morti’s feet measured ground contact, in addition to joint angle sensors, position sensors, and a treadmill speed sensor.

Under the hood, a Bayesian optimization algorithm guides the learning. It matches the foot sensor information against target data from a modeled virtual spinal cord, which runs as software on an Intel i7-based computer.
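To make the idea of Bayesian optimization concrete, here is a minimal sketch of the general technique, not Morti's actual setup. It assumes a single hypothetical CPG parameter `p` in [0, 1], a made-up `mismatch_cost` standing in for one walking trial, a Gaussian process (GP) with a squared-exponential kernel as the surrogate model, and a lower-confidence-bound rule for choosing the next trial. All names, the kernel length scale, and the noise level are illustrative assumptions.

```python
import math

# Hypothetical kernel length scale and observation noise for this sketch.
LENGTH_SCALE = 0.2
NOISE = 1e-4


def rbf(a, b):
    """Squared-exponential kernel between two scalar parameter values."""
    return math.exp(-0.5 * ((a - b) / LENGTH_SCALE) ** 2)


def cholesky(m):
    """Lower-triangular Cholesky factor of a symmetric positive-definite matrix."""
    n = len(m)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                low[i][j] = math.sqrt(m[i][i] - s)
            else:
                low[i][j] = (m[i][j] - s) / low[j][j]
    return low


def solve_lower(low, b):
    """Forward substitution: solve low @ y = b."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(low[i][k] * y[k] for k in range(i))) / low[i][i])
    return y


def solve_upper_t(low, y):
    """Back substitution: solve low.T @ x = y."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(low[k][i] * x[k] for k in range(i + 1, n))) / low[i][i]
    return x


def gp_fit(xs, ys):
    """Fit a zero-mean GP to observations (xs, ys); returns the factor and weights."""
    k = [[rbf(a, b) + (NOISE if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    low = cholesky(k)
    alpha = solve_upper_t(low, solve_lower(low, ys))
    return low, alpha


def gp_predict(xs, low, alpha, x):
    """Posterior mean and standard deviation of the GP at x."""
    k_star = [rbf(x, a) for a in xs]
    mean = sum(ks * al for ks, al in zip(k_star, alpha))
    v = solve_lower(low, k_star)
    var = max(1.0 - sum(vi * vi for vi in v), 1e-12)
    return mean, math.sqrt(var)


def mismatch_cost(p):
    """Hypothetical stand-in for one walking trial run with CPG parameter p."""
    return (p - 0.6) ** 2


def bayes_opt(cost, n_iter=12, kappa=2.0):
    """Minimize cost over p in [0, 1] using a GP and a lower-confidence-bound rule."""
    grid = [i / 100 for i in range(101)]
    xs = [0.1, 0.5, 0.9]              # initial trials
    ys = [cost(x) for x in xs]
    for _ in range(n_iter):
        # Standardize observed costs so the GP's unit prior variance is a sensible scale.
        mu = sum(ys) / len(ys)
        sd = (sum((y - mu) ** 2 for y in ys) / len(ys)) ** 0.5 or 1.0
        low, alpha = gp_fit(xs, [(y - mu) / sd for y in ys])

        def lcb(x):
            m, s = gp_predict(xs, low, alpha, x)
            return m - kappa * s      # optimistic estimate of the standardized cost

        x_next = min(grid, key=lcb)   # next trial: lowest optimistic cost
        xs.append(x_next)
        ys.append(cost(x_next))
    best = min(range(len(xs)), key=lambda i: ys[i])
    return xs[best], ys[best]
```

Calling `bayes_opt(mismatch_cost)` returns the best parameter tried and its cost. The appeal of this family of methods for a physical robot is that each trial is expensive, so the GP's uncertainty estimate is used to pick informative trials instead of sampling blindly.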

Successive optimization runs were carried out on Morti, with performance evaluated by continuously comparing measured and expected sensor information and adapting the motor control patterns in response. After roughly an hour, the robot was making good use of its leg mechanism.
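The article does not say how the comparison between expected and measured sensor information is scored. As one hedged illustration, assume the "expected" data is a foot-contact pattern: the CPG predicts ground contact for the first `duty_factor` fraction of each gait cycle, and the mismatch is the fraction of samples where the foot sensor disagrees. The function names and the duty-factor model are hypothetical.

```python
def expected_contact(phase, duty_factor):
    """Hypothetical CPG prediction: the foot is on the ground during the first
    `duty_factor` fraction of each gait cycle (phase measured in cycles)."""
    return (phase % 1.0) < duty_factor


def contact_mismatch(phases, measured, duty_factor):
    """Fraction of samples where measured foot contact disagrees with the CPG's expectation."""
    if len(phases) != len(measured):
        raise ValueError("phases and measured must have the same length")
    if not phases:
        return 0.0
    errors = sum(
        expected_contact(p, duty_factor) != m for p, m in zip(phases, measured)
    )
    return errors / len(phases)


# One gait cycle sampled ten times; the foot touches down and lifts off
# one sample later than the CPG expects.
phases = [i / 10 for i in range(10)]
measured = [False, True, True, True, True, True, False, False, False, False]
print(contact_mismatch(phases, measured, duty_factor=0.5))  # 0.2
```

A score like this could serve as the cost that an optimizer tries to drive toward zero.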

“Our robot is practically 'born' knowing nothing about its leg anatomy or how they work,” said Felix Ruppert, a former doctoral student in the Dynamic Locomotion research group at the Max Planck Institute for Intelligent Systems (MPI-IS), who elaborated on how the system worked.

“The CPG [central pattern generator] resembles a built-in automatic walking intelligence that nature provides and that we have transferred to the robot. The computer produces signals that control the legs' motors, and the robot initially walks and stumbles. Data flows back from the sensors to the virtual spinal cord where sensor and CPG data are compared,” he said.
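The article does not give the equations of Morti's CPG. As a sketch of what a central pattern generator can look like in software, the example below uses a common textbook formulation, not necessarily the one used on Morti: four coupled phase oscillators (Kuramoto-style) that lock into a trot, where diagonal legs move together. The leg labels, phase offsets, coupling gain, frequency, and the sine-shaped motor target are all hypothetical choices.

```python
import math

# Hypothetical trot gait: diagonal legs (left-front/right-hind and
# right-front/left-hind) move together, half a cycle apart.
TROT_OFFSETS = {"LF": 0.0, "RH": 0.0, "RF": 0.5, "LH": 0.5}


def cpg_step(phases, freq_hz, dt, coupling=2.0):
    """One Euler step of four coupled phase oscillators (phases in cycles, [0, 1))."""
    new_phases = {}
    for leg, phi in phases.items():
        dphi = freq_hz  # intrinsic stepping frequency
        for other, phj in phases.items():
            if other == leg:
                continue
            # Pull this leg toward its desired phase relative to the other leg.
            desired = TROT_OFFSETS[leg] - TROT_OFFSETS[other]
            dphi += coupling * math.sin(2 * math.pi * (phj - phi + desired))
        new_phases[leg] = (phi + dphi * dt) % 1.0
    return new_phases


def leg_command(phase, amplitude=0.3):
    """Hypothetical motor target (for example a hip angle in radians) for one leg."""
    return amplitude * math.sin(2 * math.pi * phase)


def run(phases, seconds, freq_hz=1.5, dt=0.001):
    """Integrate the oscillators for the given duration."""
    for _ in range(round(seconds / dt)):
        phases = cpg_step(phases, freq_hz, dt)
    return phases


# Start from unsynchronized phases; the coupling pulls them into a trot.
phases = run({"LF": 0.0, "RF": 0.3, "RH": 0.1, "LH": 0.4}, seconds=5.0)
commands = {leg: leg_command(p) for leg, p in phases.items()}
```

This open-loop rhythm is only the starting point. In the closed-loop setup the article describes, sensor feedback is compared against what the CPG expects, and the learning algorithm adjusts the CPG's output.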

“If the sensor data does not match the expected data, the learning algorithm changes the walking behavior until the robot walks well, and without stumbling. Changing the CPG output while keeping reflexes active and monitoring the robot stumbling is a core part of the learning process.”

The research is described in the paper “Learning plastic matching of robot dynamics in closed-loop central pattern generators,” published in the journal Nature Machine Intelligence.

Image credit: iStockphoto/PRADEEP87