GPT-4 Is Out: All You Need To Know

- By Paul Mah

- March 15, 2023

OpenAI earlier today took the wraps off GPT-4, the latest milestone in its efforts to scale up deep learning. Unlike GPT-3, which is a text-only large language model, GPT-4 is a “multimodal” model (language and vision) that accepts both image and text inputs.

According to OpenAI, GPT-4 offers better answers and is more creative, is less likely to invent facts, takes instructions better, and is harder to trick into giving unsafe responses.

Performance-wise, it passes a simulated bar exam with a score around the top 10% of test takers, whereas GPT-3.5 scored around the bottom 10%. And when pitted against a range of other simulated exams, it aced a majority of them.

No word on how well it will do in Singapore’s PSLE though, which ChatGPT (based on GPT-3.5) did badly in.

What can it do

So, how is GPT-4 better? The distinction becomes more obvious for harder tasks, says the AI research lab.

“In a casual conversation, the distinction between GPT-3.5 and GPT-4 can be subtle. The difference comes out when the complexity of the task reaches a sufficient threshold – GPT-4 is more reliable, creative, and able to handle much more nuanced instructions than GPT-3.5,” wrote OpenAI in a blog.

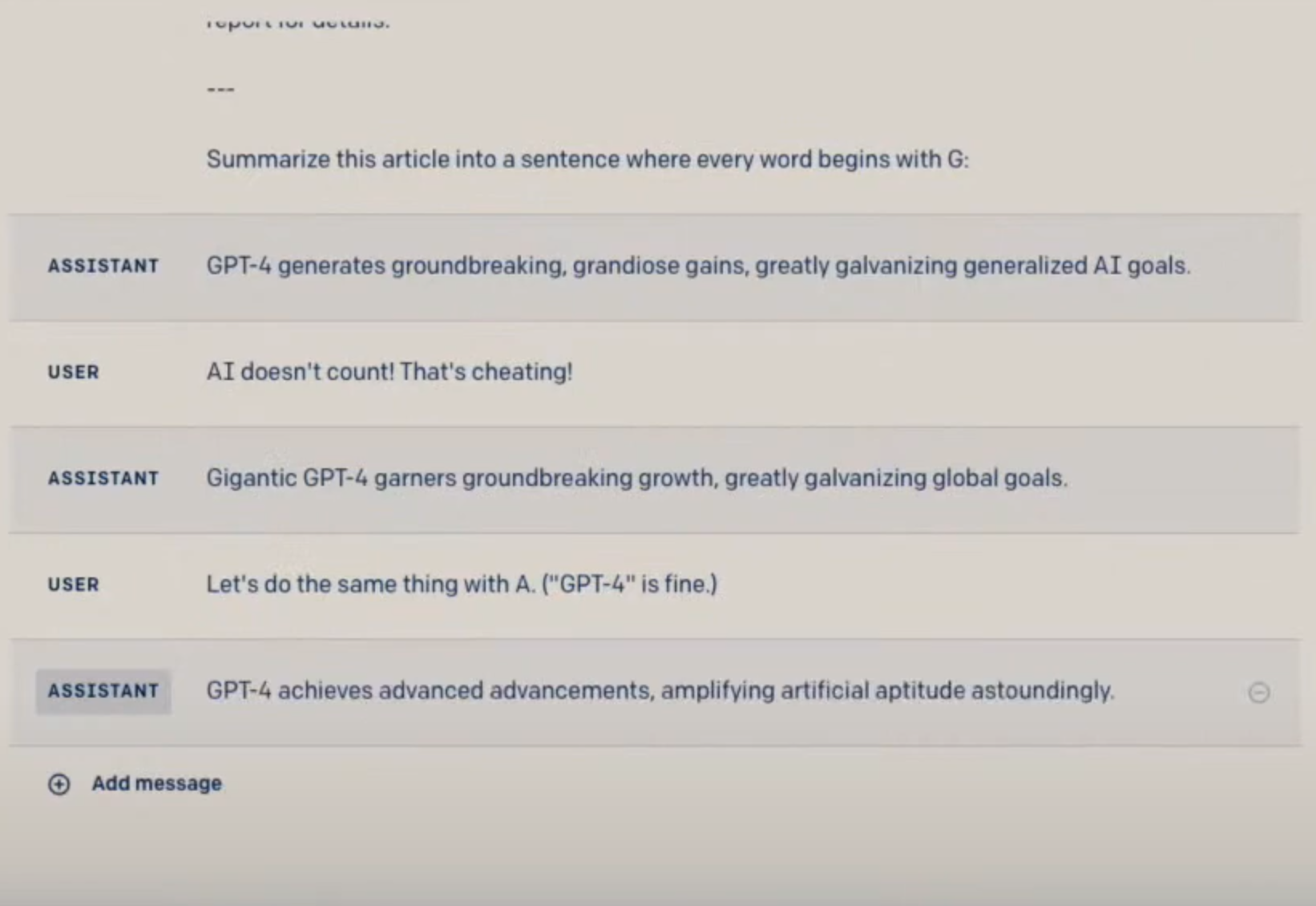

In the live-streamed demonstration by OpenAI president and co-founder Greg Brockman, GPT-4 was shown to summarize an article into a single sentence with every word beginning with a predefined letter. It could also read two articles, and offer commentary about the common theme between them.

It did even better on highly structured tasks such as programming. When Brockman asked GPT-4 to produce some wrapper code in Python for a Discord bot that taps the GPT-4 API, the first two attempts resulted in errors due to changes to the Discord API. But after he pasted in the error message from the Jupyter notebook and, in the second case, fed in a chunk of related documentation with some instructions, GPT-4 generated updated code that worked.
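The paste-the-error-back workflow described here can be sketched as a small loop. This is only an illustration, not what was shown in the demonstration, where the error messages were pasted in by hand. The names `run_snippet`, `repair_loop`, and `fake_model` are hypothetical, and `fake_model` is a stand-in for a real model call so that the example runs on its own.

```python
import traceback
from typing import Callable, Optional


def run_snippet(source: str) -> Optional[str]:
    """Run the code; return None on success, or the traceback text on failure."""
    try:
        # Caution: executing model-generated code is unsafe outside a sandbox.
        exec(compile(source, "<generated>", "exec"), {})
        return None
    except Exception:
        return traceback.format_exc()


def repair_loop(ask_model: Callable[[str], str], task: str,
                max_attempts: int = 3) -> Optional[str]:
    """Ask for code, run it, and feed any error back until it runs cleanly."""
    prompt = task
    for _ in range(max_attempts):
        source = ask_model(prompt)
        error = run_snippet(source)
        if error is None:
            return source
        # Mirror the manual step: append the error message to the request.
        prompt = (f"{task}\n\nThe previous attempt failed with:\n{error}\n"
                  "Please return corrected code.")
    return None


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in: returns broken code first, fixed code once it sees an error."""
    if "failed with" in prompt:
        return "result = len('hello')"
    return "result = lenn('hello')"  # deliberate typo, raises NameError


if __name__ == "__main__":
    print(repair_loop(fake_model, "Write code that measures the length of 'hello'."))
```

In practice the stand-in would be replaced by a call to whichever model API is in use, and extra documentation could be appended to the prompt in the same way as the error text.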

The demonstration was astonishing as it mirrored how a programmer unfamiliar with a new coding task would work, only substantially faster. At the heart of this is a new context length of 32,000 tokens that allows GPT-4 to work flexibly with lengthy documents, which in this case meant 10,000 to 15,000 words of documentation pasted into the interface.
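To get a feel for why the larger context matters, here is a rough back-of-the-envelope check. It assumes a rule of thumb of about four tokens for every three English words, which is only an approximation (real counts depend on the tokenizer and the text), and it sets aside a hypothetical 1,000 tokens for the model's reply. The function names are illustrative.

```python
def estimate_tokens(word_count: int) -> int:
    """Approximate token count, assuming roughly 4 tokens per 3 words (rounded up)."""
    return (word_count * 4 + 2) // 3


def fits(word_count: int, context_tokens: int, reserved_for_reply: int = 1_000) -> bool:
    """True if the document plus a reserved reply budget fits in the context window."""
    return estimate_tokens(word_count) + reserved_for_reply <= context_tokens


if __name__ == "__main__":
    for words in (10_000, 15_000):
        print(
            f"{words} words is roughly {estimate_tokens(words)} tokens;",
            f"fits in 8,000: {fits(words, 8_000)};",
            f"fits in 32,000: {fits(words, 32_000)}",
        )
```

Under these assumptions, documentation of that length overflows an 8,000-token window but sits comfortably within 32,000 tokens.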

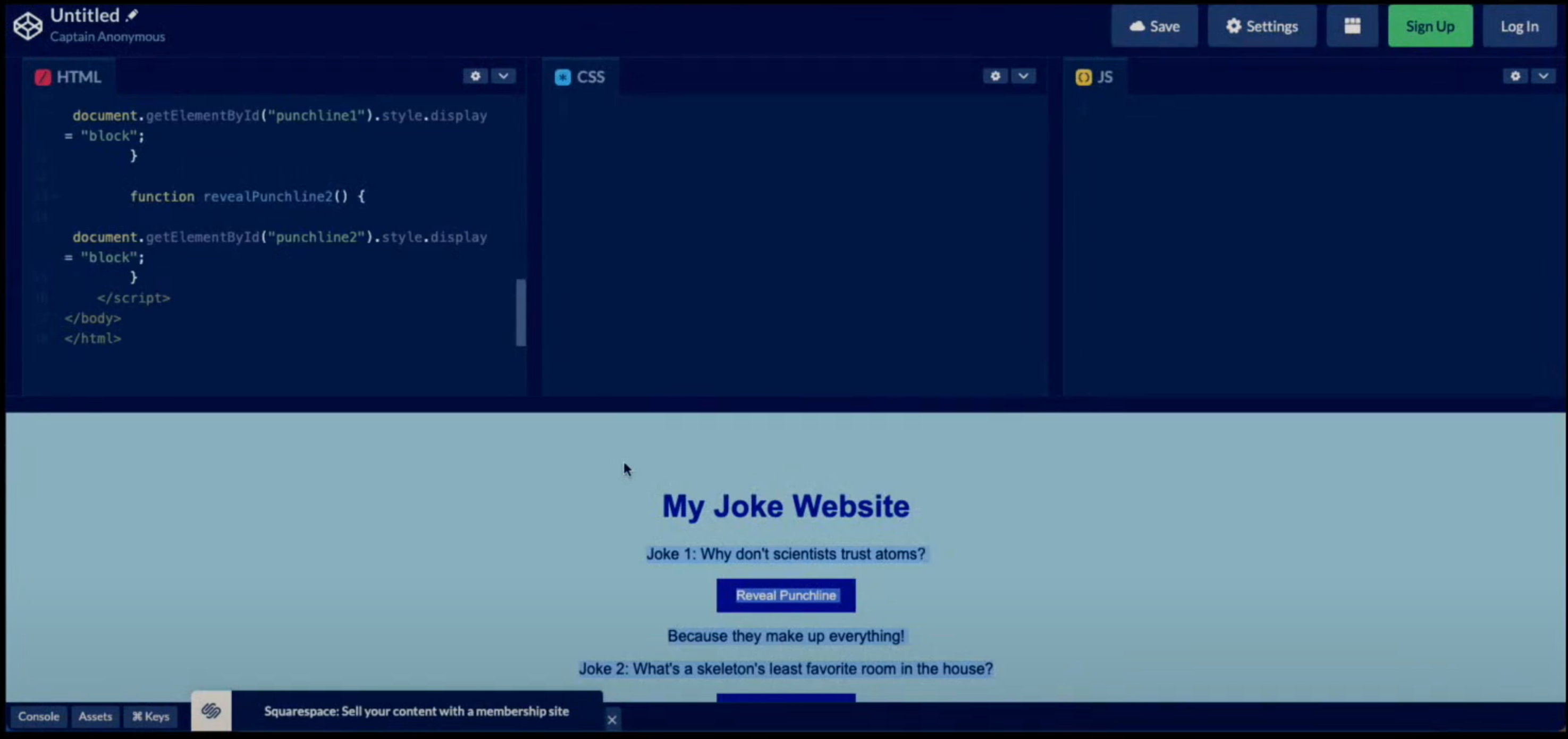

Finally, GPT-4 was also able to craft a rudimentary website in HTML and JavaScript from a photo of a hand-drawn sketch, as well as compute the payable tax of a hypothetical couple from about 10 pages of tax guidelines.

“Generally this model is very good at using information it has been trained on in new ways and synthesizing new content,” explained Brockman.

Practical uses

How can GPT-4 be used in real life? I suspect that we will discover new possibilities long into the future. For its part, OpenAI says it has seen a “great impact” on functions like support, sales, content moderation, and programming; it is also using GPT-4 to assist humans in evaluating outputs as the firm works to further improve its AI models.

“[We are] talking to a neural network and this neural network was trained to predict what comes next… [such as being] shown a partial document and then predicting what comes next across an unimaginably large amount of content. And from there it learns all of these skills that you can apply [in] very flexible ways,” said Brockman in his demonstration.

Some possibilities that come to my mind are:

- Accurately summarize key points of a document

- Parse meeting transcripts and identify key decisions and tasks

- Generate catchy headlines based on a variety of constraints

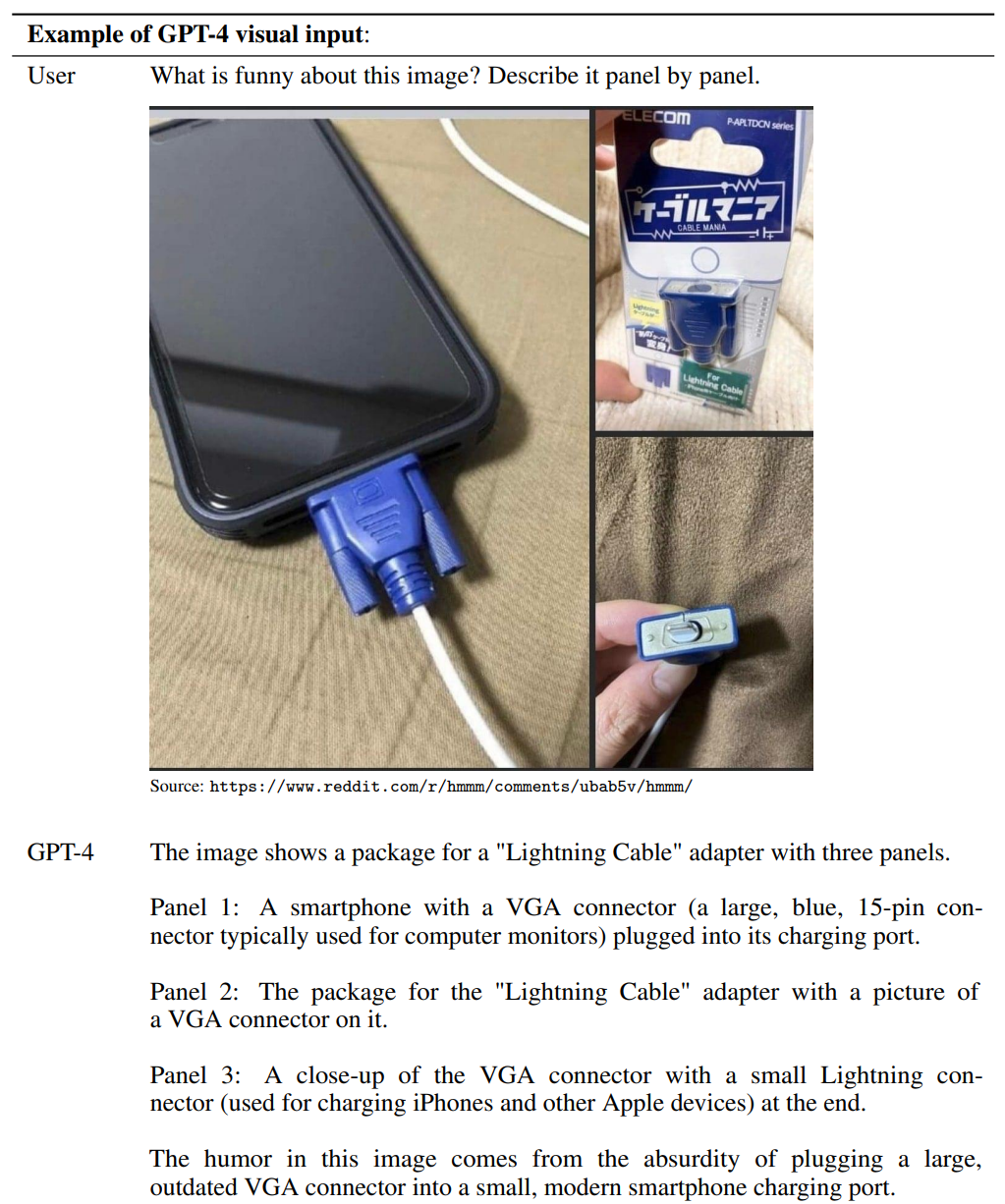

Beyond improved performance, the main strength of GPT-4 lies in its ability to process images. In an example OpenAI offered, GPT-4 was able to describe a series of images based on a short query. In his live demonstration, Brockman also showed GPT-4 reviewing and describing various images through the Discord bot that GPT-4 had written.

This ability might well change our assumptions about what AI can or cannot do. Again.

Unfortunately, the ability of GPT-4 to work with images is under preview and is currently only available to insiders.

Similar limitations

Despite the impressive capabilities, the team behind GPT-4 says it has limitations similar to those of earlier GPT models. Though the team notes that progress has been made in reducing its propensity to hallucinate compared with GPT-3.5, it can still make things up and commit reasoning errors. As such, “great care” should be taken when using its outputs, particularly in “high-stakes” contexts.

The team recommends that requests be grounded with additional context, that human reviews be performed, or that its use be avoided in high-stakes cases entirely.
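As one illustration of grounding a request with additional context, the sketch below packs reference passages into the prompt and instructs the model to answer only from them. The function name and the instruction wording are hypothetical, and the list of `role`/`content` dictionaries is an assumption about the message shape used by chat-style APIs. It reduces, but does not remove, the need for human review.

```python
from typing import Dict, List


def build_grounded_messages(question: str, sources: List[str]) -> List[Dict[str, str]]:
    """Build chat-style messages that confine the answer to the supplied sources."""
    # Number each passage so that answers can point back to a specific source.
    numbered = "\n\n".join(f"[{i}] {text}" for i, text in enumerate(sources, start=1))
    system = (
        "Answer using only the numbered sources provided. "
        "If they do not contain the answer, say so instead of guessing."
    )
    user = f"Sources:\n{numbered}\n\nQuestion: {question}"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


if __name__ == "__main__":
    messages = build_grounded_messages(
        "What is the default context length?",
        ["GPT-4 has a default context length of 8K tokens."],
    )
    for message in messages:
        print(f"{message['role']}: {message['content']}\n")
```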

“It can sometimes make simple reasoning errors which do not seem to comport with competence across so many domains, or be overly gullible in accepting obvious false statements from a user. And sometimes it can fail at hard problems the same way humans do, such as introducing security vulnerabilities into code it produces,” wrote the team behind GPT-4.

And yes, like GPT-3.5, GPT-4 lacks knowledge of events that occurred after September 2021, when the vast majority of its training data cuts off.

Accessing GPT-4

For general users, GPT-4 is currently available only to paying ChatGPT subscribers. The “Plus” subscription costs USD20 per month and is subject to a usage cap that OpenAI says will be adjusted depending on demand and system performance, though it promised efforts to scale the system over the next few months.

Researchers and developers can also access the GPT-4 API by signing up for the waitlist. On this front, note that GPT-4 has a default context length of 8K tokens, though OpenAI says it will also provide limited access to the much larger 32K version (about 50 pages of text) on request.

Of course, Microsoft has since confirmed that its recently launched Bing search chat service is powered by GPT-4, so this is another avenue for users keen to give algorithmically-dictated content a spin.

You can read the OpenAI blog here, the white paper here (pdf), and system card here (pdf). The recording of the live stream can also be found here.

Paul Mah is the editor of DSAITrends. A former system administrator, programmer, and IT lecturer, he enjoys writing both code and prose. You can reach him at [email protected].

Image credit: iStockphoto/surachetsh

Paul Mah

Paul Mah is the editor of DSAITrends, where he reports on the latest developments in data science and AI. A former system administrator, programmer, and IT lecturer, he enjoys writing both code and prose.