Can We Train AI for a Higher Purpose?

- By Yatish Rajawat

- April 10, 2023

The post-ChatGPT world is in awe of artificial intelligence. This awe is akin to that of a society bedazzled by the powerful arc lights of a magic show. The magic of AI seems to be everywhere and in everything, almost all the time. Suddenly governments, bureaucrats, technocrats, technologists, and even the social sector are discussing this “magic” that can solve many things.

If there is hype, there is money to be made, and the VC funds are sniffing for deals. Nothing wrong with following the money if it creates real entrepreneurs with exciting new apps and allows more people to grow their income.

But with some technologies, there is more that can be done. There are a few who are not dazzled, a few who don't follow the lustre of money, and a few who can see the potential of a new use of intelligence to solve society's most vexing problems.

Magically conjuring lots of money is one thing; magically addressing the most vexing problems is another. The issues of poverty, agriculture, livelihood, and education outcomes should also be the focus of AI engineers.

These are not subjects being discussed in the conference rooms of big tech companies. But a small cohort of committed Indians who have used open source to solve problems at scale took them up on a Saturday afternoon in Bengaluru on April 1, 2023. The date is important as the debate on AI has to shift from amazement to purpose.

Nandan Nilekani, Pramod Varma, Shankar Maruwada, Vivek Raghavan and many others from EkStep Foundation brought together engineers, bureaucrats, and policy researchers to frame problem sets, suggest possible AI-based solutions and prioritize the ones which will have the maximum impact.

AI can certainly impact an entire population. And more than just writing a better email or improving search results, AI can help a student tackle a question in Science in Kannada by not revealing the answer but guiding him or her toward one.

Nandan Nilekani set the tone for how AI can and should be leveraged for the public good. He felt that it should be a community effort because only then can solutions across an entire population be created.

He pointed out that there is now a playbook of sorts for what is needed to build a solution that can finally create an impact. One example is improving student learning outcomes, such as the ability to do mathematics, which is consistently poor as per the ASER report.

Pramod Varma pointed out that this is the role AI has to take: solving societal problems that, till now, were unsolvable.

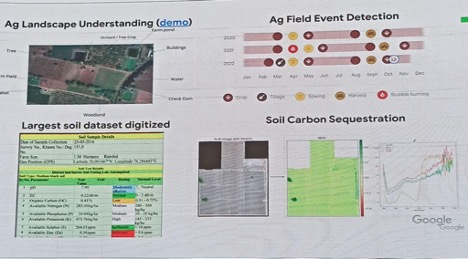

For example, he asked whether training a Super Intelligence can create a customizable farming advisory nationwide. This includes addressing variables such as crops, seeds, and fertilizers based on the farm's climate, water, carbon, nutrient, and soil conditions. The permutation of these variables can run into millions, and AI can do the computation and provide the solution in the native language of the farmer.

AI can also curate the solution for this problem in multiple Indian languages, both as voice and text. Google has captured some of these farm elements, as is visible in the accompanying slide.

Of course, the gap here is the data or the large language model to train AI to customize the solution for each farm. But as the Google slide above shows, it has been able to digitize soil data, and hopefully, over time, this data, once combined with Google geo landscape data, will get better. But the crucial question is where this data will reside.

This is where the way this cohort in Bengaluru thinks about problems departs crucially from the way big tech thinks about AI.

AI is only as good as the data it gets. Pramod Varma and Nandan Nilekani both stressed the importance of India being a data-rich country. This has happened because India has taken the lead in creating digital public goods, which has carried the digital transformation right down to the last man in the chain. Antyodaya in India has happened through the digital public good (DPG) route.

AI leaps across the language barrier

There is a severe divide between Indian and English language learners. Most technology development uses English as a default interface, information, and communication language.

This language barrier creates bias and division in all AI applications, tools, and technologies. Interestingly, the solution also lies with machine learning, and big tech has been looking to bridge this gap.

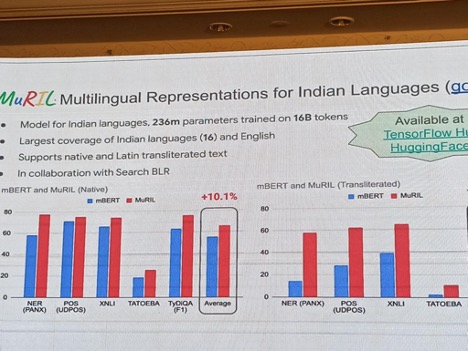

Google’s Manish Gupta talked about MorNI and MuRIL (Multilingual Representations for Indian Languages), which Google has been working on to bridge the Indian language gap. MuRIL, as explained by Google, is intended to be used for various downstream NLP tasks for Indian languages. This model is also trained on transliterated data, a phenomenon commonly observed in the Indian context. This model is not expected to perform well on languages other than the ones used in pretraining, i.e., 17 Indian languages.

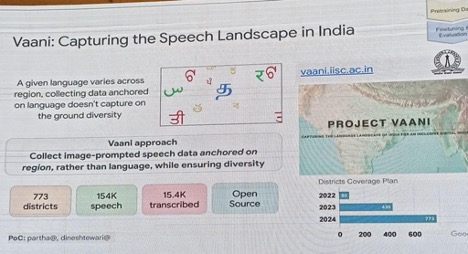

Project Vaani will be implemented jointly by the Indian Institute of Science (IISc) and Google to gather speech data from across India to create an AI-based language model that can understand diverse Indian languages and dialects. Pramod Varma, the architect of Aadhaar and UPI, explained that AI4Bharat has already made Vaani and Bhashini a DPG to solve the language barrier.

The language barrier is not just a divide in learning; it also introduces an access issue. Not everyone can type text on a small smartphone to access internet-based applications. So, voice-based access in multiple Indian languages can democratize access nationwide.

There is also the issue where language itself is not easily accessible. For instance, the legalese adopted by lawyers, judges, and even regulators or bureaucrats can be challenging to understand. While a translation is possible, it is not enough to explain the issue. For instance, in case of a property dispute, what part of the law will be applicable, and can that be transliterated into an Indian language by an AI engine so that an illiterate person can understand it? Large language models that accomplish this would be close to magic.

The magic can also be applied to let natural language be used for programming. Arun Singh, a visionary analyst, pointed out that this process is now closer to reality with the integration of Wolfram GPT with ChatGPT. In a way, it will be possible for a liberal arts student to create a program in plain English without knowing any programming language. To learn more about how this is possible, listen to this Wolfram interview.

There is also a language barrier between computer engineers and non-engineers. The ability to write in a programming language is limited to engineers. As a result, the computing that powers the advance of digital technology cannot be fully leveraged by non-engineers. ChatGPT offers a way to remove or reduce this heavy reliance on programmers to create AI magic.

AI will disrupt many industries and sectors in a data-rich country like India. It is equally crucial that we do not create AI engines that will make Indians unemployable. AI4India needs a policy framework that addresses this challenge.

Yatish Rajawat is the founder of the Centre for Innovation in Public Policy, a think tank based in Delhi. His area of research includes everything digital affecting policy, people, and the biosphere. Feedback or contact at [email protected].

Image credit: iStockphoto/guirong hao; Charts: Yatish Rajawat