AMD’s MI300X AI Accelerator Is Shipping

- By Paul Mah

- February 07, 2024

AMD has started shipping its Instinct MI300X GPUs for AI and high-performance computing (HPC) applications, according to a report on Tom’s Hardware.

AMD’s Instinct MI300X is the big brother of AMD’s Instinct MI300A, the industry’s first data center accelerated processing unit that comes with both general-purpose x86 CPU cores and CDNA 3-based processors for AI and HPC workloads.

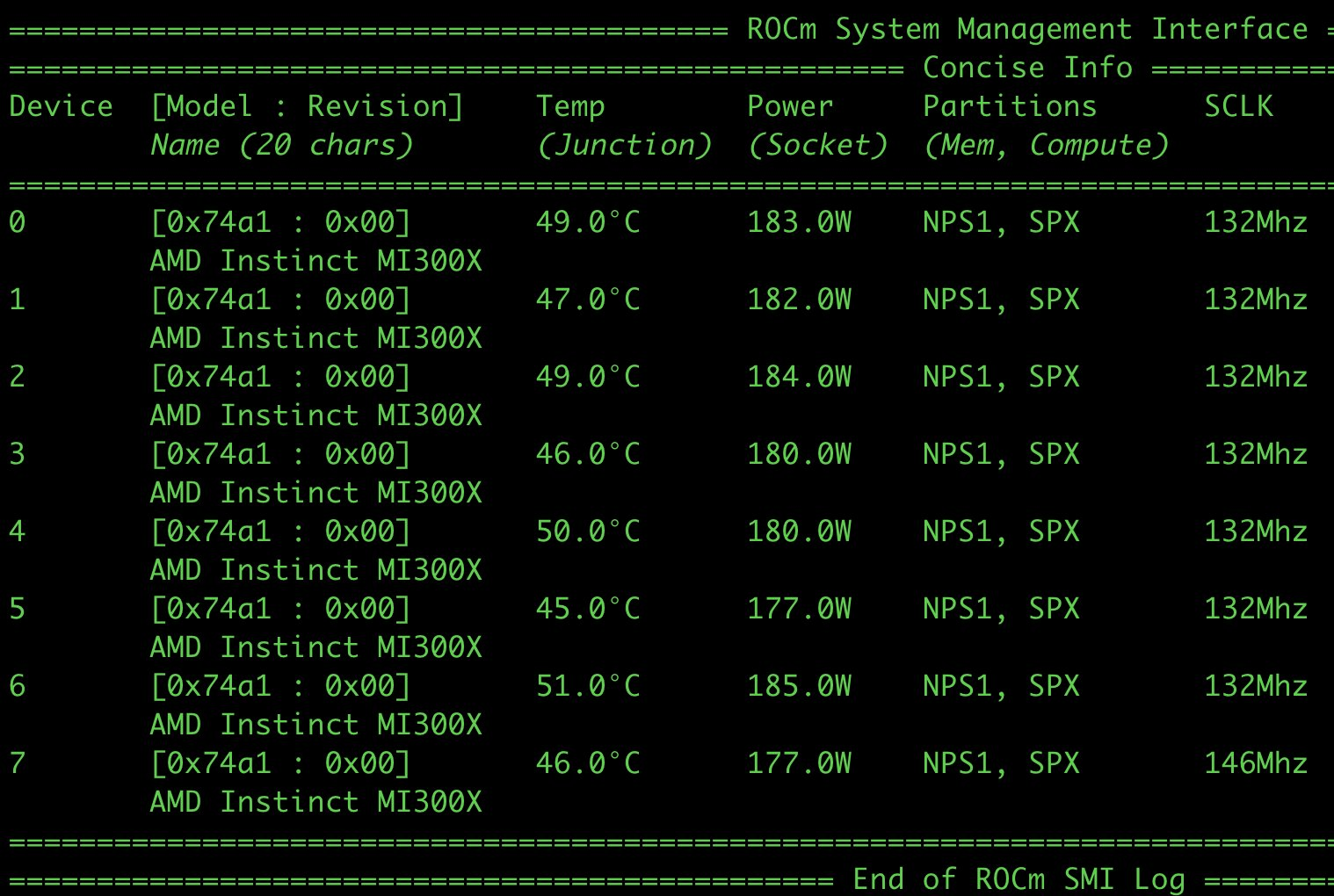

In a tweet, Sharon Zhou, CEO of LaminiAI, noted that servers equipped with as many as eight AMD MI300X accelerators are currently live in production. LaminiAI offers a self-serve AI platform that AI engineers can use to run LLMs.

“The first AMD MI300X live in production,” Zhou wrote. “Like freshly baked bread, 8x MI300X is online if you're building on open LLMs and you're blocked on compute, [let me know]. Everyone should have access to this wizard technology called LLMs; that is to say, the next batch of LaminiAI LLM pods are here.”

Fastest AI chip

The MI300X is a stand-alone accelerator, whereas its MI300A sibling is the part used in El Capitan, a two-exaflop supercomputer at the Lawrence Livermore National Laboratory. AMD claims its MI300X is the fastest AI chip in the world and that it beats Nvidia’s H100 and even the upcoming H200 GPUs.

The MI300X has 153 billion transistors and is built using 3D packaging with 5- and 6-nanometer processes. Under the hood, it comes with 304 GPU compute units, 192GB of HBM3 memory, and 5.3 TB/s of memory bandwidth. This lets it deliver 163.4 teraflops of peak FP32 performance and 81.7 teraflops of peak FP64 performance.

For its part, the Nvidia H100 SXM delivers 68 teraflops of peak FP32 and 34 teraflops of peak FP64 performance. The newer H100 NVL model closes that gap with 134 teraflops of peak FP32 performance and 68 teraflops of peak FP64 performance.
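To put those peak figures side by side, the short Python sketch below divides the MI300X numbers by each H100 variant's numbers. It uses only the teraflop values quoted above; the function name `speedup_over` is illustrative, and the ratios describe theoretical peak throughput, which may differ considerably from results on real workloads.

```python
# Peak throughput figures (teraflops) as quoted in this article.
PEAK_TFLOPS = {
    "MI300X": {"FP32": 163.4, "FP64": 81.7},
    "H100 SXM": {"FP32": 68.0, "FP64": 34.0},
    "H100 NVL": {"FP32": 134.0, "FP64": 68.0},
}


def speedup_over(baseline, chip="MI300X"):
    """Return chip/baseline peak-throughput ratios per precision, rounded to 2 places."""
    return {
        precision: round(
            PEAK_TFLOPS[chip][precision] / PEAK_TFLOPS[baseline][precision], 2
        )
        for precision in PEAK_TFLOPS[chip]
    }


if __name__ == "__main__":
    for baseline in ("H100 SXM", "H100 NVL"):
        print(baseline, speedup_over(baseline))
```

On these numbers, the MI300X's peak FP32 and FP64 throughput works out to roughly 2.4 times that of the H100 SXM and roughly 1.2 times that of the H100 NVL.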

“It’s the highest performance accelerator in the world for generative AI,” said Lisa Su, AMD’s CEO, when launching the MI300X accelerator.

“If you look at MI300X, we made a very conscious decision to add more flexibility, more memory capacity, and more bandwidth. What that translates to is 2.4 times more memory capacity and 1.6 times more memory bandwidth than the competition,” said Su.

Image credit: Twitter/@realSharonZhou

Paul Mah

Paul Mah is the editor of DSAITrends, where he reports on the latest developments in data science and AI. A former system administrator, programmer, and IT lecturer, he enjoys writing both code and prose.