Overcoming Barriers To AI Medical Scans

- By Paul Mah

- August 11, 2021

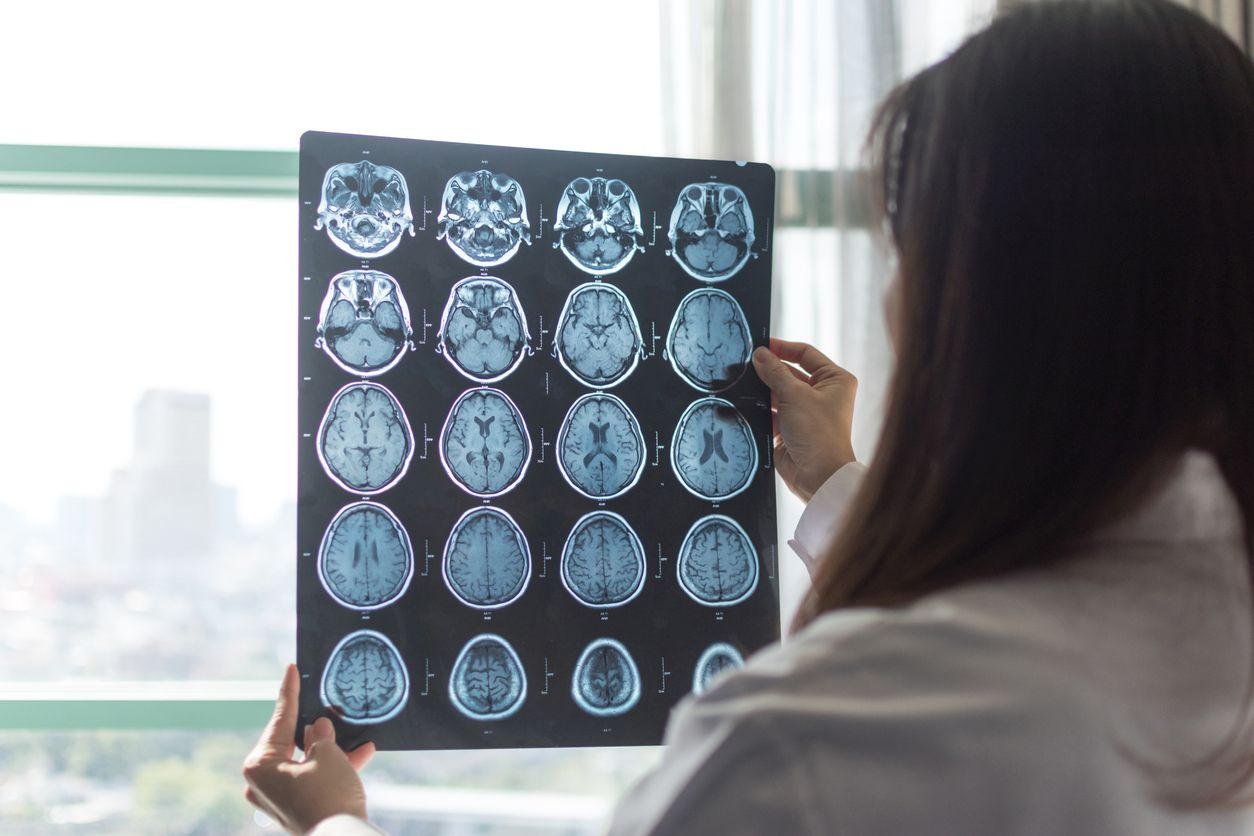

Medical imaging is an important research field and seems perfectly fitted for the use of artificial intelligence models. After all, experts are not always available, and the ability to diagnose medical conditions accurately and more quickly is bound to save lives. Crucially, advances in this area can also push the envelope for AI use in other areas.

Dataset size is not everything

To speed up the adoption of AI in medical imaging, Gael Varoquaux, a research director at the National Institute for Research in Computer Science and Automation (Inria), and Dr Veronika Cheplygina of ITU Copenhagen authored a paper identifying some of the challenges that are slowing progress in the field.

According to the researchers, one such challenge is dataset bias. It can occur when the training data was acquired with a different type of scanner or imaging procedure than the data the model is later applied to, as has been demonstrated with chest X-rays. It can also occur when the data source does not appropriately represent the range of possible patients and disease manifestations for the image analysis task.
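One way to surface this kind of bias is to break evaluation results down by acquisition source rather than reporting a single overall score. The sketch below is illustrative only: the scanner names, the labels, and the `accuracy_by_group` helper are hypothetical and do not come from the paper.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return {group: accuracy} for (group, true_label, predicted_label) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, predicted in records:
        total[group] += 1
        if truth == predicted:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


# Hypothetical evaluation records: (scanner type, true label, predicted label).
records = [
    ("scanner_A", 1, 1),
    ("scanner_A", 0, 0),
    ("scanner_A", 1, 1),
    ("scanner_A", 0, 0),
    ("scanner_B", 1, 0),
    ("scanner_B", 0, 0),
    ("scanner_B", 1, 0),
    ("scanner_B", 0, 1),
]

per_scanner = accuracy_by_group(records)
overall = sum(truth == predicted for _, truth, predicted in records) / len(records)

for scanner, accuracy in sorted(per_scanner.items()):
    print(f"{scanner}: {accuracy:.2f}")
print(f"overall: {overall:.2f}")
```

In this made-up data, the overall score hides a large gap between the two scanners, which is the pattern a per-source breakdown is meant to expose.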

Indeed, the availability of datasets can unduly influence the type of medical imaging being worked on, say the researchers. This can potentially divert attention away from other unsolved areas. Finally, comparing performance against a baseline is not always meaningful, depending on the parameters of the baseline, and might only serve to create the illusion of progress.

Data volume isn’t everything

And having a large amount of data isn’t everything, the researchers say, echoing remarks made by Andrew Ng, a co-founder of Google Brain.

“There is a staggering amount of research on machine learning for medical images… [but] this growth does not inherently lead to clinical progress,” wrote the authors, who noted that an increase in data size did not come with better diagnostic accuracy.

What’s more, a higher volume of research (and resulting data) could be due to academic incentives rather than the needs of clinicians and patients, they suggested. Fortunately, there is evidence that studies published later show improvements for larger sample sizes.

The researchers offered some strategies to overcome the challenges they identified. These include separating all test data from the start to prevent data leakage, ensuring there is adequate data to represent the diversity of patients and disease heterogeneity, and using strong baselines that properly reflect current machine learning research advances on a given topic, among others.
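As a rough illustration of setting test data aside from the start, the sketch below assigns each patient to a split once, based on a hash of the patient ID, so the assignment never changes as new scans arrive. Grouping by patient, the `assign_split` helper, the 20% test fraction, and the sample IDs are all assumptions made for this example rather than details from the paper.

```python
import hashlib


def assign_split(patient_id, test_fraction=0.2):
    """Deterministically map a patient ID to 'train' or 'test'."""
    digest = hashlib.sha256(patient_id.encode("utf-8")).hexdigest()
    # Turn the first 8 hex digits into a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "test" if bucket < test_fraction else "train"


# Hypothetical scans: (patient ID, file name). One patient may have several.
scans = [
    ("patient_001", "scan_a.png"),
    ("patient_001", "scan_b.png"),
    ("patient_002", "scan_c.png"),
    ("patient_003", "scan_d.png"),
    ("patient_003", "scan_e.png"),
    ("patient_004", "scan_f.png"),
]

splits = {"train": [], "test": []}
for patient_id, file_name in scans:
    splits[assign_split(patient_id)].append((patient_id, file_name))

train_patients = {patient_id for patient_id, _ in splits["train"]}
test_patients = {patient_id for patient_id, _ in splits["test"]}

# No patient should contribute scans to both sides of the split.
assert train_patients.isdisjoint(test_patients)
```

Because the split depends only on the patient ID, all scans from one patient stay on the same side, and the test set can be fixed before any model development begins.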

Real-world hiccups

Finally, even a good AI model doesn’t necessarily translate into real-life environments. Operational issues and limitations of on-the-ground equipment or healthcare professionals must also be taken into consideration.

According to an MIT Technology Review report last year, a Google Health team was able to leverage AI to search for signs of diabetic retinopathy. But while the system worked extremely well in the lab, it turned out that real-world use requires more than just accuracy.

The AI solution was deployed in Thailand where there are insufficient retinal specialists to meet the country’s annual goal to screen most of the population for signs of the condition. However, the system started rejecting a significant number of scans outright.

Upon investigation, it turned out that these scans were deemed to be of unacceptable quality, mostly due to poor lighting conditions. In addition, poor connectivity in some regions led to slow uploads, resulting in a protracted process and unhappy patients.

The story did have its share of successes, though, such as a nurse who practically screened a thousand patients all on her own.

AI promises a future of superior and almost instant clinical diagnosis in the medical field. But challenges involving quality datasets, bias, and operational implementation must be resolved first.

Paul Mah is the editor of DSAITrends. A former system administrator, programmer, and IT lecturer, he enjoys writing both code and prose. You can reach him at [email protected].

Image credit: iStockphoto/Pornpak Khunatorn
