When AI Is Used for Evil

- By Paul Mah

- April 13, 2022

From digital transformation and autonomous vehicles to agriculture, AI is making inroads into practically every industry. Indeed, total AI investment reached USD77.5 billion in 2021 and continues to grow by leaps and bounds.

But as the threshold to advanced machine learning is further lowered with a new generation of no-code tools and affordable access to high-performance hardware, can AI be used for anything other than good?

40,000 possible chemical weapons in 6 hours

For example, could machine learning be used to develop chemical substances designed to kill or maim?

A paper published in Nature Machine Intelligence outlined how AI could be misused to generate deadly pathogens or novel chemical weapons.

The group of scientists who first posed this question typically spend their time training machine learning (ML) models for drug discovery. By creating ML models that weed out toxicity, they were able to find safe, new drugs to heal and cure. Unfortunately, it turns out that reversing the process to seek out toxicity was all it took to create chemical weapons.

The results were chilling: In less than 6 hours, the team, using an in-house server, generated some 40,000 molecules that scored within a specified threshold of deadliness – which in this case was set to somewhere between the VX and Novichok classes of nerve agents. These included various known chemical warfare agents as well as new molecules that were predicted to be even more toxic.

To be clear, actually creating the compounds is a very different proposition from discovering them. And it is highly unlikely that the compounds needed to make a nerve agent can be obtained without alerting law enforcement.

However, the authors acknowledged that there are “hundreds” of commercial companies offering chemical synthesis, and that the area is poorly regulated. Moreover, what if models were trained to design toxic molecules based on innocuous precursors that are both widely used and easy to obtain?

Type-a-deepfake

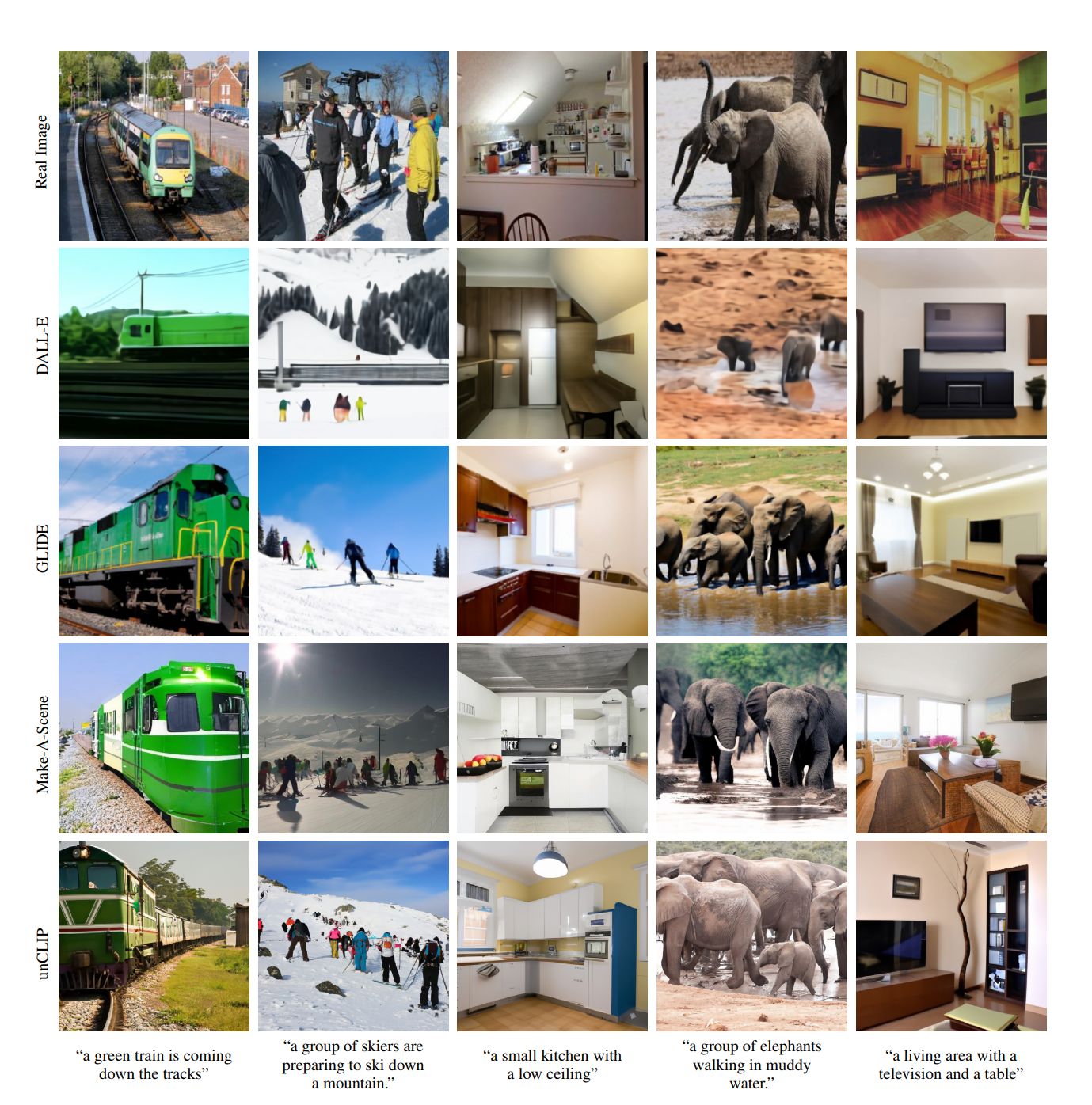

Last month, we talked about how AI-synthesized faces are now indistinguishable from the faces of real people. Well, OpenAI late last week took things further when it released the second iteration of its image creation tool that can take a description and automatically generate a highly realistic image.

DALL-E 2 can create original, realistic images and art from a text description. Think in terms of combining concepts and attributes, such as “an astronaut riding a horse in a photorealistic style” or “a corgi on a beach”.

Realistic edits can also be made to an existing image using natural language. Elements that are added or removed will automatically take shadows, reflections, and textures into account – no Photoshop required. Details about the research and various examples are available from OpenAI.

It doesn’t take a genius to realize the disinformation, propaganda, or scam potential of such a tool in the wrong hands.

For instance, Singapore is currently facing a plague of scammers, with victims collectively losing almost USD1 billion in 2021 alone. Scammers with the ability to generate deepfakes on the fly will only exacerbate the problem.

For now, OpenAI has taken steps to limit the capabilities of DALL-E 2 and restrict who can access it. But given how today’s cutting edge is tomorrow’s normal, it wouldn’t surprise me if similar image generation capabilities make their way into the mainstream over the next few years.

Are your thoughts really your own?

Finally, can AI algorithms disrupt our ability to think? In a contributed piece published on VentureBeat, Gary Grossman, a senior vice president at Edelman and global lead at the Edelman AI Center of Excellence, lamented his use of driving apps despite being good at directions.

“Perhaps we should be paying more attention to this not-so-subtle shift in our reliance on AI-powered apps. We already know they diminish our privacy. And if they also diminish our human agency, that could have serious consequences,” he wrote.

As we trust a growing array of apps and cruise through life on ‘autopilot’, digesting news articles and social media feeds without question, Grossman asked, might we lose the ability to form our own opinions and develop our own interests?

He cited Jacob Ward’s book “The Loop”, which in Chapter 9 offers a chilling glimpse of a future human society shaped by AI.

“The data is sampled, the results are analyzed, a shrunken list of choices is offered, and we choose again, continuing the cycle… By using AI to make choices for us, we will wind up reprogramming our brains and our society,” wrote Ward.

Ward further cautioned: “Leaning on AI to choose and even make art, or music, or comedy will wind up shaping our taste in it, just as it will shape social policy, where we live, the jobs we get.”

As we close off the week with Good Friday, I think it is worth mulling over the trajectory of AI and what it bodes for us all. Is there still time to establish proper boundaries and governance around the use of AI?

Paul Mah is the editor of DSAITrends. A former system administrator, programmer, and IT lecturer, he enjoys writing both code and prose.

Image credit: iStockphoto/AlessandroPhoto
